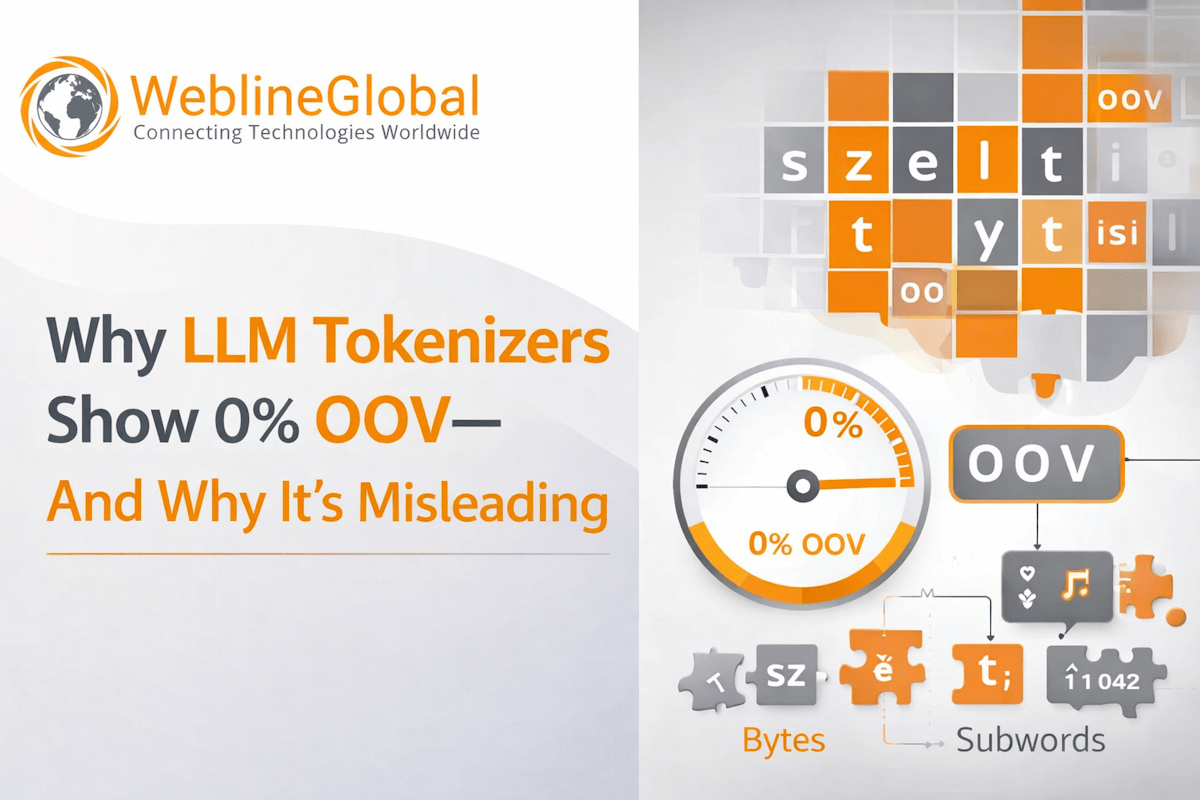

Why LLM Tokenizers Show 0% OOV — And Why It’s Misleading

Measuring the out-of-vocabulary (OOV) rate of a modern LLM tokenizer often yields a puzzling 0.00%. Explore a real-world multilingual NLP project in which evaluating BPE and SentencePiece tokenizers exposed the limitations of traditional UNK-token tracking, and learn how fragmentation metrics give a truer picture of how well a tokenizer handles each language and, in turn, of AI model performance.
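To make the contrast concrete, here is a minimal sketch. It is not the project's evaluation code. It assumes a toy greedy longest-match tokenizer as a stand-in for real BPE or SentencePiece models. Its byte fallback is modelled loosely on the `<0xNN>` byte pieces that SentencePiece emits when byte fallback is enabled, which is one common reason a tokenizer never produces UNK. It also assumes that "OOV rate" means the share of words containing an UNK token, and it uses fertility (average tokens per word) and the share of split words as example fragmentation metrics. All names (`toy_tokenize`, `evaluate`, `VOCAB`, `UNK`) are hypothetical.

```python
from typing import Dict, List, Set

UNK = "<unk>"  # hypothetical UNK piece name for this toy tokenizer


def toy_tokenize(word: str, vocab: Set[str], byte_fallback: bool = True) -> List[str]:
    """Greedy longest-match subword tokenizer (a toy stand-in, not real BPE).

    Characters that no vocab entry covers become UTF-8 byte pieces such as
    "<0xD0>" when byte_fallback is True, and UNK otherwise.
    """
    tokens: List[str] = []
    i = 0
    while i < len(word):
        # Try the longest vocab entry that starts at position i.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No entry matched: spell the character as bytes, or emit UNK.
            if byte_fallback:
                tokens.extend(f"<0x{b:02X}>" for b in word[i].encode("utf-8"))
            else:
                tokens.append(UNK)
            i += 1
    return tokens


def oov_rate(per_word: List[List[str]]) -> float:
    """Share of words whose tokenization contains at least one UNK."""
    if not per_word:
        return 0.0
    return sum(UNK in toks for toks in per_word) / len(per_word)


def fertility(per_word: List[List[str]]) -> float:
    """Average number of tokens per whitespace-delimited word."""
    if not per_word:
        return 0.0
    return sum(len(toks) for toks in per_word) / len(per_word)


def split_ratio(per_word: List[List[str]]) -> float:
    """Share of words broken into two or more tokens."""
    if not per_word:
        return 0.0
    return sum(len(toks) > 1 for toks in per_word) / len(per_word)


def evaluate(text: str, vocab: Set[str], byte_fallback: bool = True) -> Dict[str, float]:
    """Tokenize each whitespace-delimited word and report all three metrics."""
    per_word = [toy_tokenize(w, vocab, byte_fallback) for w in text.split()]
    return {
        "oov_rate": oov_rate(per_word),
        "fertility": fertility(per_word),
        "split_ratio": split_ratio(per_word),
    }


# Hypothetical English-only vocabulary, for illustration only.
VOCAB = {"the", "cat", "sat", "on", "mat", "s"}

if __name__ == "__main__":
    print(evaluate("the cat sat on the mat", VOCAB))
    print(evaluate("кот сидит", VOCAB))  # Russian: "the cat is sitting"
    print(evaluate("кот сидит", VOCAB, byte_fallback=False))
```

On the English sentence every metric looks ideal: OOV rate 0.0, fertility 1.0, no split words. On the Russian sentence the OOV rate is *still* 0.0, but fertility jumps to 8.0 tokens per word because each Cyrillic letter is spelled out as two byte pieces. Only when byte fallback is switched off does the OOV metric register the problem (1.0). This is the sense in which a 0% OOV rate can hide heavy fragmentation.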