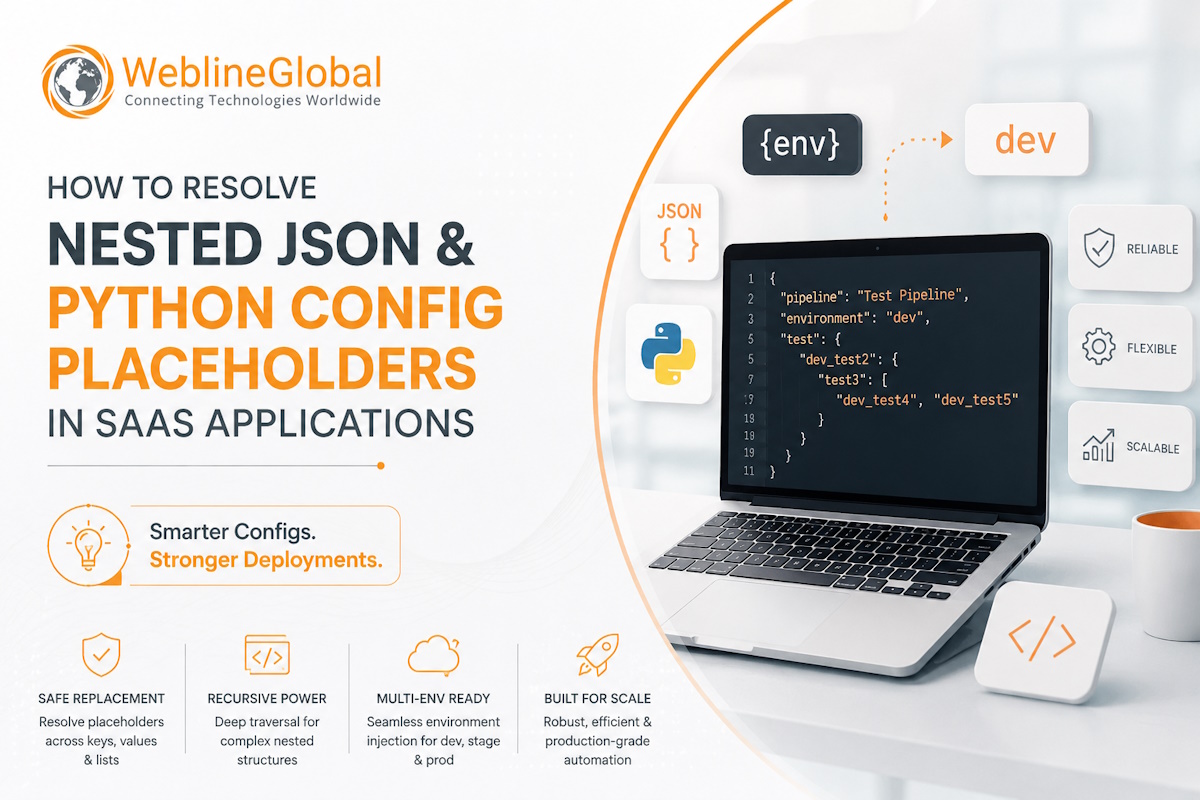

How to Resolve Nested JSON & Python Config Placeholders in SaaS Applications

During a recent multi-tenant SaaS deployment, our team had to inject environment variables into deeply nested Python configurations. This post walks through how we moved beyond a basic recursive substitution function to reliably resolve placeholders inside nested JSON dictionaries and lists, so that tenant-specific values are filled in correctly when each environment is provisioned.
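As a point of reference, here is a minimal sketch of recursive placeholder resolution over a parsed JSON config. It is not necessarily the approach described later in the post. It assumes a `${VAR}` / `${VAR:default}` placeholder syntax and a hypothetical helper named `resolve_placeholders`; neither comes from the original setup. The function walks dictionaries and lists, substitutes placeholders found in strings, and returns other values unchanged.

```python
import json
import os
import re
from typing import Any, Mapping, Optional

# Assumed placeholder syntax: ${VAR} or ${VAR:default}
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)(?::([^}]*))?\}")


def resolve_placeholders(value: Any, env: Optional[Mapping[str, str]] = None) -> Any:
    """Return a copy of `value` with ${VAR} placeholders replaced from `env`.

    Hypothetical helper: falls back to os.environ when no mapping is given.
    """
    env = os.environ if env is None else env

    if isinstance(value, dict):
        # Recurse into values; keys are left as-is.
        return {key: resolve_placeholders(item, env) for key, item in value.items()}
    if isinstance(value, list):
        return [resolve_placeholders(item, env) for item in value]
    if isinstance(value, str):
        def substitute(match: re.Match) -> str:
            name, default = match.group(1), match.group(2)
            if name in env:
                return env[name]
            if default is not None:
                return default
            # Fail loudly rather than shipping an unresolved placeholder.
            raise KeyError(f"environment variable {name!r} is not set")

        return _PLACEHOLDER.sub(substitute, value)
    # Numbers, booleans and None pass through unchanged.
    return value


if __name__ == "__main__":
    raw = '{"db": {"host": "${DB_HOST}", "port": 5432}, "tenants": ["${TENANT:default}"]}'
    config = json.loads(raw)
    print(resolve_placeholders(config, {"DB_HOST": "db.internal"}))
    # {'db': {'host': 'db.internal', 'port': 5432}, 'tenants': ['default']}
```

Passing an explicit mapping instead of always reading `os.environ` keeps the function easy to test and lets each tenant be resolved against its own set of values.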